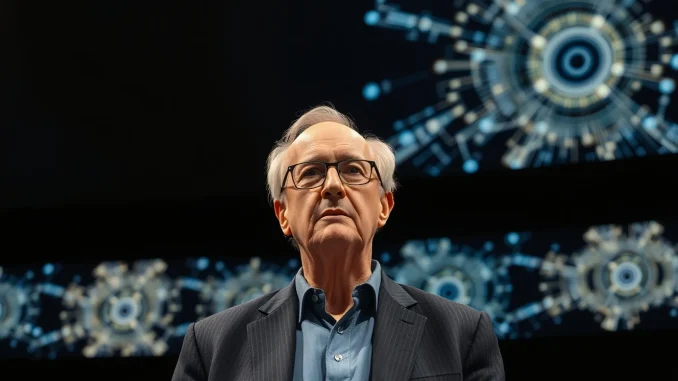

Billionaire media mogul Barry Diller does not believe OpenAI CEO Sam Altman is untrustworthy, despite recent reporting that has painted the AI executive in a less favorable light. Speaking on stage at The Wall Street Journal’s ‘Future of Everything’ conference this week, Diller vouched for Altman, who has faced accusations from former colleagues and board members of being manipulative and deceptive. However, Diller argued that the debate over individual character may miss the larger point entirely.

Trust vs. the Unknown

Diller, a co-founder of Fox Broadcasting and chairman of IAC and Expedia Group, was responding to a question about whether the public should place faith in Altman to ensure artificial intelligence benefits humanity. The question centered on the theoretical form of AI known as Artificial General Intelligence, or AGI, which could one day outperform humans on any task. Diller said that while he believes Altman is sincere, that is not where people's concern should be focused.

‘One of the big issues with AI is it goes way beyond trust,’ Diller said. ‘It may be that trust is irrelevant because the things that are happening are a surprise to the people who are making those things happen.’ He added that he has spent time with people in the creation mode of AI, and they themselves have ‘a sense of wonder.’ Diller described the trajectory of AI as ‘the great unknown,’ noting that neither the public nor the creators fully understand where it is heading.

AGI and the Need for Guardrails

Diller warned that the core issue is not the stewardship of AI leaders but the unpredictable nature of AGI. ‘They don’t know what can happen once you get AGI, and we’re close to it,’ he said. ‘We’re not there yet, but we’re getting closer and closer, quicker and quicker. And we must think about guardrails.’

He cautioned that if humans fail to establish those guardrails, the alternative could be dire: ‘Another force, an AGI force, will do it themselves. And once that happens, once you unleash that, there’s no going back.’ Diller’s remarks reflect a growing sentiment among tech observers and industry insiders that the pace of AI development may outstrip the ability of even its creators to control or predict outcomes.

What This Means for the AI Industry

Diller’s comments come at a time when trust in AI leadership is under scrutiny. Altman has faced criticism from some former associates, yet Diller, who is friendly with Altman, described him as ‘a decent person with good values.’ Still, Diller declined to name which AI leaders he considers insincere, leaving that question open. His broader point is that the conversation around AI must shift from personal trust to systemic preparedness. As AGI edges closer to reality, the need for transparent governance and strong safety measures becomes more urgent.

Conclusion

Barry Diller’s remarks at the WSJ conference serve as a reminder that the AI debate is evolving. While trust in individual leaders like Sam Altman matters, the more pressing concern is the unpredictable nature of AGI itself. Diller’s call for guardrails echoes a wider industry conversation about how to manage a technology that could fundamentally reshape society. For now, the message is clear: trust is not enough.

FAQs

Q1: What is AGI?

AGI, or Artificial General Intelligence, is a theoretical form of AI that could perform any intellectual task that a human can do. Unlike current narrow AI, which is designed for specific tasks, AGI would possess general reasoning and learning abilities.

Q2: Why did Barry Diller say trust may be irrelevant?

Diller argued that the unpredictable nature of AGI means that even well-intentioned leaders like Sam Altman cannot fully anticipate the consequences of their creations. Trust in individuals, he said, does not address the fundamental uncertainty of the technology.

Q3: What are guardrails in the context of AI?

Guardrails refer to safety measures, ethical guidelines, and regulatory frameworks designed to prevent AI from causing unintended harm. Diller emphasized the need for such measures before AGI becomes a reality.
